Open Data in the Capitol, aka the Anti-PDF and Show Us Your Data Day

Today I spent my day sitting in Senate and Assembly hearing rooms in our (rather beautiful) state capitol to testify in favor of two new bills that would make open data more impactful in California.

It’s a bit of a departure from my normal tech scene, but for the first time a slew of open data bills are reaching the state legislature, and they are really important to the future of our state.

The first up was SB 272 from Senator Hertzberg, a piece of legislation that requires local governments to conduct and publish an inventory of their data systems and contents. This one is big. I’m calling it the “Show us your data bill”.

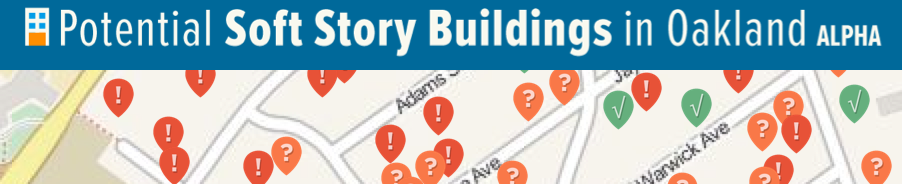

Open data has been used by civic hackers to build countless new apps and to explain data in new ways: from apps that tell people whether they live in an earthquake-safe home, to tools that make city budgets understandable for the first time, to tools that help families easily find early childhood education and services from the convenience of their phones.

SB 272 is an important piece of our state infrastructure. While many cities do have open data policies, these take time to implement, and in the meantime residents are left in the dark about what data their local government actually has and how it’s collected. This matters for building trust: knowing what data our police department collects is vital to understanding how that department works. We’ve had impassioned fights in Oakland over data privacy, yet most of that debate has happened without anyone really knowing how much data our city has, or what kind.

Data inventories will empower residents and lead to better public records requests. Right now, if you don’t know what is collected, you are forced to make vague, uncertain requests, never sure whether the data even exist. With public data inventories, our communities will be able to make informed requests. This builds trust and improves efficiency.

SB 272 is also important because there is too much opacity in local government contracting; making visible the exact systems and software used to manage these data will provide valuable intelligence to the business community.

Lastly, these inventories are critical because, as technologists build ever more powerful and useful apps, we keep running into the problem of those apps stopping at the city border: the data don’t exist, or aren’t obviously available, in the next city over. Knowing which data exist, and where, is a huge step toward encouraging future innovation and making modern tools work for all of our residents.

Next up was AB 169 from Assemblymember Maienschein, a small piece of legislation that helps define what “open” means and firms up the standards by which data are published, when they are published. Let’s call this one the “Kill All PDFs” Bill. It requires data or records to be published in machine-readable, digital formats that can be searched and indexed. This is good, but unfortunately a PDF can be searched by Google, so from a geek’s perspective the bill isn’t perfect. Still, it makes data publishing standards much clearer and more helpful for those of us using public data. Perhaps just as important, the bill requires data to be published in their original structure where possible, putting pressure on government staff not to refactor or redact data files unless legally necessary.

Both bills passed with unanimous support and are off to the next stage of political machination. I’m hopeful they will see sunlight in the end; they are important pieces of our future. As this day wraps up, I’m left dwelling on one thing. Every issue heard today had a slew of hired lobbyists representing the various interests: electric cars, solar systems, government contractors and more. I’ve been frustrated by the lack of movement and leadership on open data at the state level in California, and now I realize something:

Open Data has no lobbyist.