Spike @spjika : Busy night in @Oakland city hall, hackers working on civic issues for our city w @openoakland

It’s not often you get to testify before one of your most admired leaders, and to do so side by side with another amazing community leader you’ve respected and appreciated from afar, but today I get to do both, and I’m pretty much in awe of what a privilege this is. It’s harder and harder to leave my girls at home and travel, but for someone deep in the work of making cities better and more equitable at a local level, there are few chances to influence change at a national level. And so I’m in Cincinnati, where it’s below freezing, away from sunny California, where yesterday I was drinking a good beer on the balcony with my wife, complaining it was too warm. In January.

Today I’m testifying to the President’s Task Force on 21st Century Policing, which includes Bryan Stevenson of the Equal Justice Initiative, a man who brought me to tears the last time I heard him speak. I don’t go in for hero worship, but Bryan would be on the short list.

What does a data geek and open government advocate have to say about the future of policing reforms in our country? My testimony is below…

Dear members of the task force and other community leaders,

I’m speaking today on behalf of Urban Strategies Council, a 28-year-old social justice organization in Oakland, California, where I have had the privilege of being the director of research and technology for almost eight and a half years. I am also here on behalf of OpenOakland, a civic innovation organization that I co-founded with Eddie Tejeda.

My work with the council has provided me with an opportunity to see how a lack of transparency in local government affects data-driven decision making, government technology, and community engagement. I’ve had the chance to work with many local agencies and community-based organizations to help them unearth their valuable data, to analyse it and put it in context, and then to help communicate the story and results of those data.

Traditionally, government has been perceived as a collector of data for compliance and reporting purposes, yet this is no longer sufficient in our view of 21st Century government. Government now needs to be proactive about sharing data across all agencies, especially those that have traditionally been very closed and inaccessible. For many years we have been unearthing public data for research purposes and publishing these data openly for all to access: from data on local probationer populations to crime reports and foreclosure filings. When we obtained both open and private data and published a report on investor acquisitions of foreclosed homes, our work led to the creation of new laws to protect tenants and monitor housing purchases. When public data is put in the hands of communities, powerful things can happen.

We led an effort to crowdsource the legislation to make open data the law in Oakland, and now we have local agencies actively making data available to the public free of charge or restriction. This has led to breakthrough innovations such as OpenBudgetOakland.org, which, when shown to our city council, led to disbelief: never before had decision makers seen their own budget in such clear context, and the impact was powerful. Residents of our city were able to understand a complicated 16,000-line budget for the very first time, something made possible by opening data and by engaging the community in a respectful collaboration. Hackers, city staff and advocates working together.

Another local example of what happens when government opens up valuable data and collaborates is our earthquake safety app (http://softstory.openoakland.org), which helps inform low-income renters if they are living in a building susceptible to collapse in the next big quake. This app was built by the community as open source and is now being deployed in a nearby city.

You’ll notice I’ve not talked about great policing collaboration examples. For good reason. Despite generating a near real-time flow of crime reports, our local police departments and sheriffs have not been eager to jump into the world of open data, yet. Given the lack of trust in the Oakland Police Department, the need for real community policing and the dearth of accessible information about policing practices and incidents, Oakland’s police department, like most other US law enforcement agencies, needs to embrace open data and to develop respectful collaborations and engagement that lead to innovation.

Given the way communities of color are impacted by crime and violence, and the number of officer-involved shootings and assaults on officers, there is a very real and urgent opportunity for data to be leveraged for their benefit. Right now there are activist groups building databases of all officer-involved incidents and homicides; these are duplicated efforts costing hundreds of hours of community time, from projects such as Oakland’s Shine in Peace to http://killedbypolice.net/ and http://www.fatalencounters.org/. These projects should be taken as a leading indicator of a huge and growing demand for better transparency in our law enforcement agencies: citizens are clamouring for data to inform decision making, policy reform and civic action. When communities across the country need to collect news reports of officer-involved shootings and homicides, we’re missing something. When stop and frisk data are hidden from public view and not available for community research and analysis, we’re missing something. When arrest information only sees sunlight in the form of aggregate yearly reports, we’re missing something. That something will be realized when local law enforcement adopts a policy of open by default and begins publishing (with some obvious legal limitations) record-level data of all crime reports, arrests, uses of force and weapon discharges, along with stop and frisk incident data.

As individuals we do not trust that which we cannot see. Publishing data alone will not lead to better insights and operations; it is not a silver bullet for restoring community trust in police departments. However, in publishing these data on an ongoing basis, we make possible new, productive collaborations and new opportunities to engage, with somewhat objective truths to work from, and we allow for innovations that we could not predict. My recommendation to this task force: make open by default the new norm for our police forces, support the open publishing of these data, encourage standard data formats and support these agencies in taking a leading role to learn together and to work towards common goals. Let us work toward a future where transparency is no longer a laughable concept when it comes to law enforcement, where communities trust the information coming out of police databases and where residents can see and understand patterns and problems for themselves. Then we can have informed debates and start to remake policing in the USA in ways we agree on.

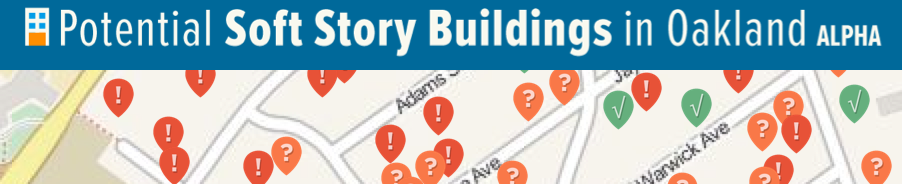

Oakland has almost 610 homes at risk of collapse or serious damage in our next big earthquake! These homes, called “soft-story” buildings, are multi-unit, wood-frame, residential buildings with a first story that lacks adequate strength or stiffness to prevent leaning or collapse in an earthquake. These buildings pose a safety risk to tenants and occupants, a financial risk to owners and a risk to the recovery of the City and region.

Today we are launching a new OpenOakland web and mobile app that will help inform and prepare Oakland renters and homeowners living in these buildings at risk of earthquake damage. The new app: SoftStory.OpenOakland.org provides Oaklanders with a fast, easy way to see if their home has been evaluated as a potential Soft Story building and is at increased risk in an earthquake.

The stats:

This new app relies on data from a screening of potential soft-story buildings undertaken by the City of Oakland and data analyzed by the Association of Bay Area Governments (ABAG). Owners and renters can see if their home is considered at risk or has already been retrofitted, and learn about the risks to soft-story buildings in a serious earthquake, an event that is once again on people’s minds after last week’s magnitude 6.0 earthquake in Napa.
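For the curious, the core of a lookup like this is just matching a typed-in address against the screening list. Here is a minimal sketch of that idea, assuming a hypothetical list of records with made-up `address` and `status` fields; it is not the app’s actual code or the City/ABAG schema, and real address matching (unit numbers, misspellings) is messier than this.

```python
import re

# Hypothetical abbreviation table so "Street" and "St" normalize to the same thing.
SUFFIXES = {"STREET": "ST", "AVENUE": "AVE", "BOULEVARD": "BLVD"}


def normalize_address(address: str) -> str:
    """Uppercase, turn punctuation into spaces, and abbreviate a few street suffixes."""
    words = re.sub(r"[^\w\s]", " ", address.upper()).split()
    return " ".join(SUFFIXES.get(word, word) for word in words)


def build_index(records):
    """Map each normalized address to its screening status."""
    return {normalize_address(r["address"]): r["status"] for r in records}


def lookup(index, address):
    # "not on list" only means the address wasn't in the screening data,
    # NOT that the building is safe.
    return index.get(normalize_address(address), "not on list")


# Made-up example records, for illustration only.
records = [
    {"address": "123 Example Street", "status": "at risk"},
    {"address": "45 Sample Ave.", "status": "retrofitted"},
]
index = build_index(records)
print(lookup(index, "123 example st"))    # at risk
print(lookup(index, "45 Sample Avenue"))  # retrofitted
```

Normalizing both sides the same way is the design choice that matters here: it lets “123 Example Street” and “123 example st” land on the same key without any fuzzy matching.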

We’re launching this app as a prototype on short notice, as we believe this information is critical for Oaklanders at this time. The app was built with the support of ABAG and the City of Oakland and had technical support provided by Code for America. Once again, where local government is increasingly transparent, and where data is open and in open formats, our community can build new tools to help inform and empower residents.

The digital divide is a very real and very persistent reality in communities like Oakland, California. Knowing which neighborhoods have solid access to high-speed internet is a critical aspect of planning government- and nonprofit-provided online services: if we want low-income folks from Oakland’s flatlands to use a new digital application, we’d damn sure better know how many households in the target areas likely have decent-speed internet hookups at home! Luckily for us, the FCC collects reliable data on this and publishes it freely at a local level.

Do yourself a favor and view the fullscreen version: http://cdb.io/1jq8wDq

I took the raw tract level data and joined it to census tracts in QGIS, calculated a new string field called “
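The QGIS steps described here (join tract-level rows to census tracts on the tract ID, then calculate a text field) can be sketched in plain Python. This is a hypothetical illustration, not the workflow used for the map: the `geoid`, `code` and `access_label` names are made up (the original field name is cut off above), and it assumes the FCC file reports a bucket code from 0 to 5 for residential connections per 1,000 households. Check the file’s own documentation before relying on those buckets.

```python
# ASSUMPTION: bucket codes 0-5 for residential connections per 1,000 households.
# Labels and field names are hypothetical.
CODE_LABELS = {
    0: "0",
    1: "0-200",
    2: "200-400",
    3: "400-600",
    4: "600-800",
    5: "800+",
}


def join_tracts(tracts, broadband_rows):
    """Attach the broadband code and a derived string label to each tract, keyed on its ID."""
    codes = {row["geoid"]: row["code"] for row in broadband_rows}
    joined = []
    for tract in tracts:
        code = codes.get(tract["geoid"])  # None when the tract has no matching row
        label = CODE_LABELS.get(code, "no data")
        joined.append({**tract, "code": code, "access_label": label})
    return joined


# Made-up tract IDs, for illustration only.
tracts = [{"geoid": "T1"}, {"geoid": "T2"}]
broadband = [{"geoid": "T1", "code": 2}]
for row in join_tracts(tracts, broadband):
    print(row["geoid"], row["access_label"])
```

Keeping unmatched tracts with a “no data” label, rather than dropping them, mirrors a left join in QGIS and keeps gaps visible on the map.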

If you’d prefer to never see this kind of mess happening in Oakland (thanks, Berkeley, for the great non-example), you should join Oakland Votes and many residents of the city to work on the creation of an independent Redistricting Commission for our city! Details are on the flyer below; this will be a ballot measure this November, assuming that the city council passes it. The meeting is to get community input into the model Oakland chooses to adopt. Both California and Austin have done this, and we can learn from their efforts! We’ll have good food, and you’ll get to play a valuable part in shaping the future of our city, and the future shape of our council maps too!

Props to @mollyampersand for the original, distant design elements.

You may not have heard about this yet, which is a shame. It’s a shame because it’s a rare good thing in local government tech, because it’s a serious milestone for our city hall and because our local government isn’t yet facile with telling our community about the awesome things that do happen in Oakland’s city hall. But I’m excited, and I’m impressed that we’ve gotten here- Oakland’s crime report data is now being published daily, automatically, to the web, freely available for all.

This is quite a leap forward from where we were several years ago, and in fact just a year ago, to be honest: spreadsheet hell. Often photocopied spreadsheet hell. Things happen slowly, but some things we’ve pushed for because we recognize their potential to change the game forever. First we pushed for open data as a policy in the city, and we got there quickly enough, but we’re now waiting in expectation for our new CIO Bryan Sastokas to publish the city’s crucial open data implementation plan. Then we started pushing public records into the open with the excellent RecordTrac app, which makes all public information requests public unless they relate to sensitive crime information. And now, with local developers soaking up the public data available, we have the first ever Oakland crime feed and an API to boot!

The API isn’t actually something the city built; it’s a significant side benefit of their Socrata data platform: just publish data in close to real time and their system will give you a tidy API to make it discoverable and connectable. You can access their API here:

http://data.oaklandnet.com/resource/ym6k-rx7a.json
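If you want to pull everything from that endpoint rather than the first page, a minimal sketch of paging through it with Socrata’s standard `$limit` and `$offset` query parameters might look like this. It uses only the Python standard library; the URL is the one quoted above and may no longer be live, and the `page_url`/`fetch_all` helpers are my own illustration, not part of any Socrata client.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

BASE_URL = "http://data.oaklandnet.com/resource/ym6k-rx7a.json"


def page_url(base: str, limit: int, offset: int) -> str:
    """Build the URL for one page of results using SoQL paging parameters."""
    return base + "?" + urlencode({"$limit": limit, "$offset": offset})


def fetch_all(base: str = BASE_URL, page_size: int = 1000):
    """Yield records page by page until the API returns an empty page (needs network access)."""
    offset = 0
    while True:
        with urlopen(page_url(base, page_size, offset)) as resp:
            rows = json.load(resp)
        if not rows:
            break
        yield from rows
        offset += page_size
```

Socrata’s documentation recommends adding an `$order` clause so pages stay stable while you walk through them; I’ve left it out because I’m not sure of this dataset’s field names. `urlencode` escapes `$` as `%24`, which the API decodes normally.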

If you’re more of an analyst, a journo or a citizen scientist, you may want the data in bulk, which you can grab here. That link will get you to a file that is updated on a daily basis; pretty rad, huh? Crime reports tend to trickle in: some get reported days after the fact, some months after, and some get adjusted, so the data will change over time. The only way to build a complete, perfect dataset is to constantly review for changes, update, replace and so on, which is a very complicated task. If you want a bulk chunk of data covering multiple years, with many richer fields and much higher quality geocoding, you can grab what we’ve published at http://newdata.openoakland.org/dataset/crime-reports (covers 2003 to 2013), and for the more recent historic version you can use this file: http://newdata.openoakland.org/dataset/oakland-crime-data-2007-2014
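The “constantly review for changes, update, replace” step boils down to an upsert. Here is a minimal sketch of that, assuming each daily file has a unique `case_number` field. That field name is a guess about the feed’s schema, not something I’ve confirmed.

```python
def merge_snapshot(local: dict, snapshot: list, key: str = "case_number"):
    """Upsert one daily snapshot into a local store keyed by case number.

    New cases are inserted, changed cases are replaced, and unchanged cases are
    left alone. Returns (added, updated) counts.
    """
    added = updated = 0
    for record in snapshot:
        k = record[key]
        old = local.get(k)
        if old is None:
            added += 1
        elif old != record:
            updated += 1
        local[k] = record
    return added, updated


# Made-up records, for illustration only.
store = {}
print(merge_snapshot(store, [{"case_number": "A1", "crime": "theft"}]))    # (1, 0)
print(merge_snapshot(store, [{"case_number": "A1", "crime": "robbery"}]))  # (0, 1)
```

One caveat: this never deletes anything, so a report the city later withdraws from the feed would linger in your copy. Handling that means comparing the full set of keys in each snapshot, which only works if the daily file covers the whole time window you care about.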

Now that we’ve figured out how to pump crime report data out of the city firewall, we can get to work connecting it to oakland.crimespotting.org and building dashboards to support community groups, city staff and more!

So thank you to the city staff who worked to get this done- now let’s get hacking!

Sidebar: Oakland has finally gotten hold of its new Twitter handle: @Oakland is now online! More progress…

Oakland is once again talking about data and facts concerning crime, causes and policing practices, except we’re not really. We’re talking about an incredibly thin slice of a big reality, a thin slice that’s not particularly helpful, revealing nor empowering. And this is how we always do it.

Chip Johnson is raising the flag on our lack of a broad discussion about the complexity of policing practices and the involvement of African-Americans in the majority of serious crimes in our city, and on that I say he’s dead right: these are hard conversations and we’ve not really had them openly. The problem is, the data we’re given as the public (and our decision makers have about the same) are not sufficient to plan with, make decisions from or understand much at all. Once again we’re given a limited set of summary tables that present just tiny nuances of reality and that do not allow for any actual analyses by the public or by policy makers. And if you believe that internal staff get richer analysis and research to work with, you’re largely wrong.

When we assume that a few tables of selectively chosen metrics suffice for public information and justification for decisions or statements, we’re all getting ripped off. And the truth is our city departments (OPD esp.) do not have the capacity for thoughtful analytics and research into complex data problems like these. And this is a real problem. Our city desperately needs applied data capacity, not from outside firms on consultancy (disclosure: my current role does this sometimes for the city) but with built up internal capacity. There is a strong argument for external, independent access to data for reliable analysis in many cases, but our city spends hundreds of millions per year and we don’t have a data SWAT team to work on these issues for internal planning. Take a look at what New York City does for simple yet powerful data analytics that saves lives, saves money and makes the city safer. This is what smart businesses do to drive better decision making.

Data, in context, with local knowledge and experience, evidence based practices (those showing success elsewhere) and a good process will yield smarter decisions for our city.

Data tables do not tell us about any nuances in police stops; we don’t know how these data vary across different neighborhoods, nor anything about the actual situations around each stop. The lack of real data that shows incident-level activity makes any real understanding impossible.

For example, the data report shows that stops of White people yield a slightly higher proportion of seizures/recoveries, so by that logic, why doesn’t OPD pull over more White folks, if those stops lead to solid hits at least as often?
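The arithmetic behind that comparison is simple: a hit rate is recoveries divided by stops for each group. The point of record-level data is that you can compute it for any grouping you like, not just the ones someone chose for a summary table. Here is a minimal sketch; the field names (`race`, `neighborhood`, `recovery`) are hypothetical, and the records are made up purely to show the calculation. They are not OPD figures.

```python
from collections import defaultdict


def hit_rates(stops, by):
    """Share of stops in each group (grouped by the field named `by`) that led to a seizure/recovery."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for stop in stops:
        group = stop[by]
        totals[group] += 1
        hits[group] += stop["recovery"]  # True counts as 1, False as 0
    return {group: hits[group] / totals[group] for group in totals}


# Made-up records, NOT real stop data.
stops = [
    {"race": "White", "neighborhood": "X", "recovery": True},
    {"race": "White", "neighborhood": "Y", "recovery": False},
    {"race": "Black", "neighborhood": "X", "recovery": True},
    {"race": "Black", "neighborhood": "X", "recovery": False},
    {"race": "Black", "neighborhood": "Y", "recovery": False},
    {"race": "Black", "neighborhood": "Y", "recovery": False},
]
print(hit_rates(stops, "race"))          # {'White': 0.5, 'Black': 0.25}
print(hit_rates(stops, "neighborhood"))
```

Swapping `by="race"` for `by="neighborhood"` is exactly the kind of breakdown a pre-built summary table can’t give you.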

Back in 2012 OPD gave Urban Strategies Council all their stop report data to analyze, but there was no context and no clear path of analysis suggested, making it near impossible to produce thoughtful results, and that analysis was not part of our actual contract. But the data exist and should be used by the city to really understand how our police operate, the context of their work and the patterns that lead to meaningful impacts, rather than habits that are never reflected upon, questioned or changed.

It is not our city’s job to just do the work, process the paperwork and never objectively review meta-level issues. According to our Mayor, “Moving forward, police will be issuing similar reports twice a year.” We need data geeks in city hall to support our police and all departments, and in 2014 we need to be better than data reports that consist of a set of summary tables alone. Pivot tables are not enough for public policy.

If you’re still reading, the same problem arises with the Shot Spotter situation: the Chief doesn’t think it’s worth the money, but our Mayor and CMs want to keep it. We now have the data available to the public, but we’ve not really had any objective evaluation of the system’s utility for OPD use, and we’ve certainly not had a conversation in public about the potential benefits of public access to this data in something closer to real time! Just looking at the horrendous reality of shootings in East Oakland over the past five years makes one pause very somberly when considering how much OPD must deal with and how much they need more analytical guidance to do their jobs better and more efficiently.

For a crazy look at shootings by month over these five years, take a look at this animation, with the caveat that not all of the city had sensors installed the whole time and that on holidays a lot of incidents in the data are likely fireworks! It makes me want to know why there is a small two-block section of East Oakland with no gunshots in five years. The data have been fuzzed to be accurate to no more than 100 feet, but this still looks like an oasis. Who knows why?
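If you want to rebuild the monthly view yourself and keep the fireworks caveat in mind, a minimal sketch is to count detections per month and separately count those falling on likely fireworks dates. It assumes the timestamps come as ISO-format strings. The set of holiday dates is my own assumption, so adjust it to taste.

```python
from collections import Counter
from datetime import datetime

# ASSUMPTION: dates where fireworks probably inflate the counts, as (month, day).
LIKELY_FIREWORKS = {(7, 4), (12, 31), (1, 1)}


def monthly_counts(timestamps):
    """Return ({'YYYY-MM': total}, {'YYYY-MM': count on likely fireworks dates})."""
    totals, fireworks = Counter(), Counter()
    for ts in timestamps:
        dt = datetime.fromisoformat(ts)
        month = dt.strftime("%Y-%m")
        totals[month] += 1
        if (dt.month, dt.day) in LIKELY_FIREWORKS:
            fireworks[month] += 1
    return dict(totals), dict(fireworks)


# Made-up timestamps, for illustration only.
totals, fireworks = monthly_counts(
    ["2013-07-04T22:15:00", "2013-07-04T23:01:00", "2013-07-12T01:30:00", "2013-08-02T20:00:00"]
)
print(totals)     # {'2013-07': 3, '2013-08': 1}
print(fireworks)  # {'2013-07': 2}
```

Flagging rather than dropping those days lets you show the months both ways. The other caveat, sensor coverage changing over time, can’t be fixed from timestamps alone.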

Given OPD’s recent suggestion that they want to ditch the Shot Spotter system, and given that the data are available, it seems worthwhile to start digging into the data to see what use they may have, starting with public benefits. This map is a really simple visualization of the shots from January to October of 2013. At city level it becomes a mess, but at neighborhood level it is far more revealing. Data in web-friendly formats are available here also.

You can view it fullscreen here.

To see the areas of the city formally covered by this system use these [ugly] maps.

Traditional public information seeks to meet minimal legal requirements, maintain power inside institutions, discourage dissent, and deliver a pre-determined result. Community engagement seeks a higher reward by respecting the power, wisdom and experience of residents, and engaging them in decision-making – the consent of the governed.

A sneak preview of my favorite line from our forthcoming report on the efforts of the Oakland Votes Coalition in 2013! Prose from Sharon Cornu.

There’s a frustrating but worthwhile read over at sf.streetsblog on the city’s decision to close down part of the Latham Square pilot in downtown Oakland. The pilot was meant to last for six months and is being partly shelved after just six weeks. This is another sad example of bad use of data, closed decision making and poor engagement in our city.

Problem #1:

Planning Director Rachel Flynn, when asked for data on Latham Square’s use, said, “We don’t know how to measure pedestrian and bicycle activity.”

This is 2013, and we have the powers of Google at our fingertips (yes, despite the clunky computers in city hall, staff can still get on to the internets). Had our city staff actually wanted to understand the problem or the situation, there were two stupidly simple options. We could have worked with local hackers to build simple, cheap sensors using Raspberry Pi devices and off-the-shelf sensors (read how here). Or we could have simply paid for a small pilot using the super clever MotionLoft system, built in SF, which is aimed at helping retail businesses understand pedestrian flow and patterns.
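To show how little logic a DIY counter actually needs, here is a minimal sketch of the counting side. Reading the hardware itself (say, a motion or beam-break sensor wired to a Pi’s GPIO pins) is left out, and we just assume a list of trigger times in seconds. The 1.5-second quiet window is an assumption, not a tested value.

```python
def count_passes(trigger_times, debounce=1.5):
    """Collapse bursts of sensor triggers into passes.

    A new pass is counted only when the sensor has been quiet for at least
    `debounce` seconds since the previous trigger.
    """
    passes = []
    last = None
    for t in sorted(trigger_times):
        if last is None or t - last >= debounce:
            passes.append(t)
        last = t
    return passes


def hourly_totals(passes):
    """Count passes per hour, where hour 0 covers seconds 0-3599."""
    totals = {}
    for t in passes:
        hour = int(t // 3600)
        totals[hour] = totals.get(hour, 0) + 1
    return totals


# Made-up trigger times, for illustration only.
passes = count_passes([0.0, 0.4, 0.9, 5.0, 5.2, 3700.0])
print(passes)                 # [0.0, 5.0, 3700.0]
print(hourly_totals(passes))  # {0: 2, 1: 1}
```

It undercounts groups walking together, since they register as one pass. But even a rough, consistent count before and after a change beats “we don’t know how to measure.”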

No data is not a situation that is acceptable in this century. No data simply suggests we don’t care enough to gather it. It says that facts are not really what matter, it’s all about perception and personal opinion. No data cannot be adequately challenged or debated. Data are not everything, but no data are dangerous.

Problem #2:

When you hear an official say something like “we were kind of hearing the same thing over and over,” you should be skeptical, especially when people representing significantly sized local organizations say they have heard almost nothing but opinions that differ from those proffered by city staff. This problem breaks down into two sub-issues. Firstly, there is the type of engagement common in our planning department and the city in general: a couple of town hall meetings, which tend to attract squeaky wheels who oppose most projects and which are scheduled to suit only a small percentage of the community. In-person meetings alone are not a sufficient form of engagement, given how digital our community largely is. Secondly, there is little opportunity to really test this statement: the meetings don’t have nicely recorded videos to replay the conversations and objections, and the city is not maintaining an online discussion on the pros and cons of this project. We have no record of these complaints within easy reach.

So what?

It’s disappointing that in a city that desperately lacks innovation and experimentation, we cancel one of the few creative place-based projects so fast. When the rationale for ending the project is that it was “prompted by negative feedback… What we’ve heard from property owners and businesses is they need that access” for cars, it’s hard not to wonder whether that is the best approach to civic decision making.

Almost no project or idea in Oakland goes without its critics- if we shut down every experiment to improve our city with no data to objectively measure the impact and if we continue to fail to leverage online communities for ideation and constructive feedback, we are doomed to remain a city under-invested in itself and its future.

If you love the current (well, former) plaza, you can sign the WOBO petition.